Musicbusinessworldwide.com

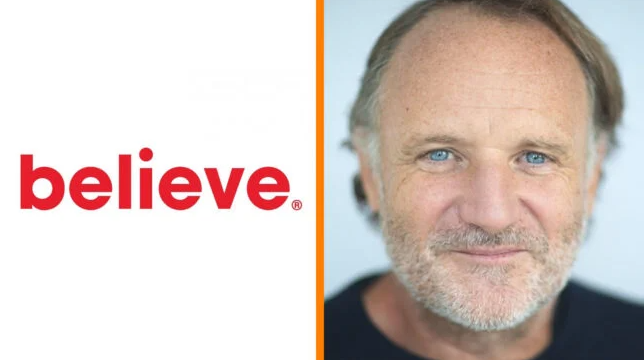

Believe is taking a sharper stance in the AI arms race, unveiling an updated generative AI policy that both restricts unlicensed content and embraces new, licensed creative tools. The move reflects a broader industry pivot: separating AI innovation from what executives increasingly view as legal and reputational risk.

At the center of the update is enforcement. Believe and its DIY distribution platform TuneCore will now automatically block songs created using unlicensed AI platforms, which the company labels “pirate studios.” Using detection systems that it claims are now 99% accurate, Believe says it can identify not just whether a track is AI-generated, but which model created it.

If that model lacks proper licensing, the release is stopped before it reaches streaming services.

CEO Denis Ladegaillerie has framed the policy as both a legal safeguard and a quality-control measure, warning that distributors who continue to push unlicensed AI music risk future litigation. He has also called on streaming platforms to adopt similar protections, arguing that failure to act could create long-term liability.

At the same time, Believe is deepening its investment in AI’s upside. The company has signed new licensing agreements with emerging AI firms ElevenLabs and Udio, adding to a growing ecosystem of partnerships between rights holders and tech developers. The strategy is clear: block unlicensed inputs, but support tools that compensate creators and operate within industry frameworks.

This dual approach builds on Believe’s “Responsible AI” principles, first outlined in 2023, and balances copyright protection with innovation. While AI-generated music still represents a tiny share of total streams, its rapid growth has raised concerns around fraud, oversupply and artist attribution.